How does ELAO calculate its precise quarter-level score for universities and professionals?

When it comes to language assessment, most tools stop at broad level labels: A2, B1, B2. These are recognisable and easy to read, but they are not always enough. A learning coordinator building homogeneous groups, an HR manager comparing candidates’ written skills, or a training organisation managing language integration pathways — they all share the same need: a measurement that is precise, stable and easy to interpret.

This is exactly what we set out to build with ELAO. Behind a score like B1 (50) or B2 (25) lies a rigorous mechanism, designed by our team of language educators and validated against large-scale data. Here is how it works, and why it concretely changes the way results are used.

A percentage score, converted into levels

The first thing to understand is that we do not calculate a CEFR level directly. Our algorithm works with an internal score expressed as a percentage, on a scale of 0 to 100. Only then does a conversion grid translate this result into a position on the European framework.

This approach has an important implication: our system is not limited to the standard CEFR breakdown. Because we start from a continuous percentage, we can adapt the conversion grid to any institutional scale. For instance, we developed a bespoke framework for the Alliance Française in Atlanta with 35 to 36 sub-levels, including up to eight steps within a single level (B1.1, B1.2, B1.3… B1.8). This is simply not possible with tools that do not work from such a granular base.

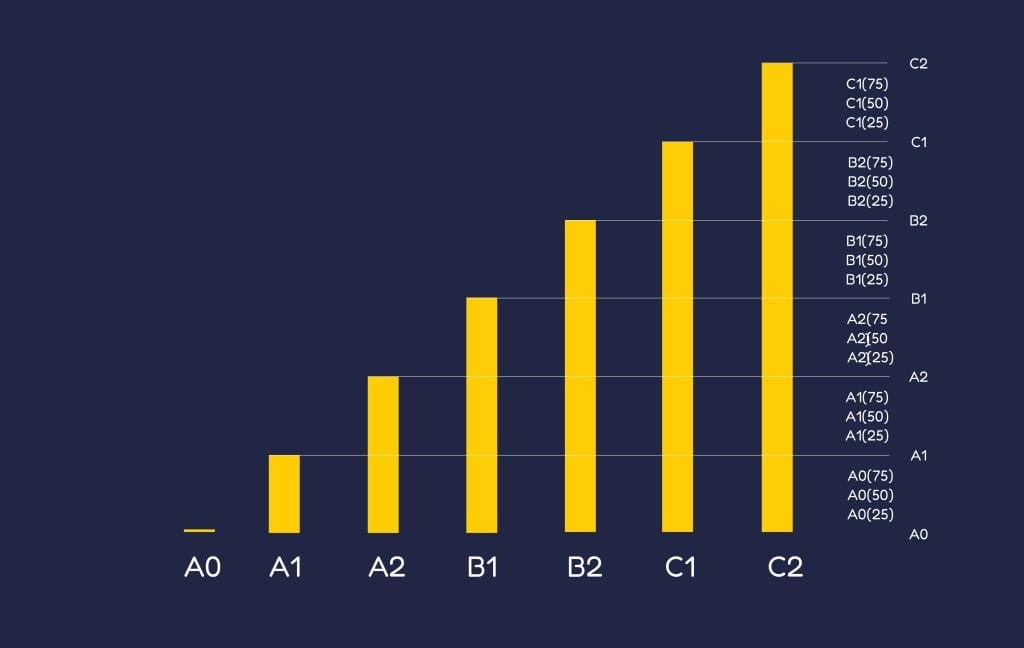

In its standard configuration, our test uses four sub-steps per CEFR level, represented by the suffixes 0, 25, 50 and 75. This is what we call the quarter-level score.
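To make the idea of a conversion grid concrete, here is a minimal sketch in Python. It is an illustration only: the article does not publish the actual ELAO thresholds, so the evenly spaced bands, the `a0_below` cut-off and the names `build_grid` and `to_quarter_level` are all assumptions made for the example.

```python
from bisect import bisect_right

CEFR_LEVELS = ("A1", "A2", "B1", "B2", "C1", "C2")


def build_grid(levels=CEFR_LEVELS, suffixes=(0, 25, 50, 75),
               a0_below=4.0, label_fmt="{level} ({suffix})"):
    """Build a hypothetical grid as (lower_bounds, labels).

    Assumption: everything under `a0_below` percent is A0, and the rest of
    the 0-100 scale is split into equal-width bands. Real thresholds would
    be set by assessment experts, not computed like this.
    """
    width = (100.0 - a0_below) / (len(levels) * len(suffixes))
    bounds, labels = [0.0], ["A0"]
    band = 0
    for level in levels:
        for suffix in suffixes:
            bounds.append(a0_below + band * width)
            labels.append(label_fmt.format(level=level, suffix=suffix))
            band += 1
    return bounds, labels


def to_quarter_level(percent, grid=None):
    """Translate a 0-100 internal score into a label from the grid."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    bounds, labels = grid or build_grid()
    # Pick the last band whose lower bound is <= percent.
    return labels[bisect_right(bounds, percent) - 1]


print(to_quarter_level(45))  # B1 (50) under these made-up thresholds
print(to_quarter_level(56))  # B2 (25) under these made-up thresholds
```

Because the percentage is computed first and the grid is applied afterwards, swapping in a different grid (more steps per level, different labels) does not require touching the scoring itself; in this sketch that amounts to calling `build_grid` with other `suffixes` and `label_fmt` values.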

What the quarter levels actually mean

The numerical suffix that accompanies the level is not decorative. It indicates where the learner sits within that level — and this information is immediately actionable.

A score of 0 means the level has just been reached. The learner meets the minimum criteria for that level, but without much headroom. A score of 25 indicates a consolidated level: the foundations are solid and the candidate is comfortable in typical situations for that stage. A score of 50 places the learner halfway between their current level and the next: they have covered half the remaining ground. Finally, a score of 75 signals that the next level is almost within reach — this is what is often called a “strong level”, where a learner needs only a little more practice or exposure to move up.

This level of detail allows trainers and training managers to make far more nuanced decisions than a simple global level would ever permit.

How the algorithm builds this score in real time

The ELAO test is adaptive. This means the difficulty of questions adjusts in response to the answers given throughout the session. The system does not follow a fixed path: it draws from a bank of over 800 items and selects between 80 and 121 questions depending on the type of ELAO test (ELAO+, General, Business, Screening or Select).

With each answer, the algorithm refines the score in progress. A correct answer at a given difficulty level adds points; an incorrect one removes them. This mechanism of progressive addition and subtraction converges towards a stable percentage, which is then converted into a quarter-level score using the reference grid.

This is not a mark at the end of an exam: it is an estimate that sharpens with every question, much like a thermometer homing in on an accurate reading. As a result, the test can be relatively short while remaining precise, because it spends little time on questions that are far too easy or far too hard for the candidate.
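The add-and-subtract mechanism described above can be sketched as a simple staircase procedure. This is not ELAO's algorithm: the starting point, the shrinking step size and the function name `run_adaptive_session` are assumptions chosen to show how a running score can converge towards a stable percentage.

```python
def run_adaptive_session(answer_fn, n_questions=80, start=50.0,
                         first_step=16.0, shrink=0.85, min_step=0.5):
    """Return a 0-100 estimate after an adaptive run.

    `answer_fn(difficulty)` stands in for the candidate: it receives the
    difficulty of the item asked (0-100) and returns True if answered
    correctly. All numeric defaults are illustrative assumptions.
    """
    estimate, step = start, first_step
    for _ in range(n_questions):
        difficulty = estimate  # ask an item pitched at the current estimate
        if answer_fn(difficulty):
            estimate += step   # correct answer: add points
        else:
            estimate -= step   # incorrect answer: remove points
        estimate = max(0.0, min(100.0, estimate))
        # Later answers move the score less, so the estimate settles down.
        step = max(min_step, step * shrink)
    return estimate


# Simulated candidate who gets every item at or below difficulty 62 right.
result = run_adaptive_session(lambda difficulty: difficulty <= 62)
print(abs(result - 62) <= 1.0)  # True: the estimate settles near 62
```

A real adaptive test would pick actual items from its bank and handle guessing and slips; the sketch only shows why each answer narrows the estimate rather than simply adding to a final mark.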

The four modules of the standard ELAO test

The final score does not come from a single dimension. We assess four distinct modules, the combination of which produces the overall result.

Grammatical structures measure the ability to handle syntax correctly and naturally. Active vocabulary evaluates the words a learner can draw on to produce content, whether written or spoken. Passive vocabulary tests word recognition in context — what a person understands without necessarily being able to produce. Finally, listening comprehension assesses the ability to decode spoken language across a variety of situations.

These four dimensions combined give a far richer picture than a traditional grammar test. And because they are measured separately, the detailed results can also identify uneven profiles (a learner who is strong in grammar but less confident in listening, for example), which directly informs pedagogical choices.
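As a rough illustration of how separate module results can expose an uneven profile, the sketch below averages four module percentages and flags any module that falls well below that average. The equal weighting, the 15-point gap and the function names are assumptions; the article does not say how the modules are actually combined.

```python
from statistics import mean

MODULES = ("grammar", "active_vocabulary", "passive_vocabulary", "listening")


def overall_score(scores):
    """Average the four module percentages (equal weights are an assumption)."""
    return mean(scores[module] for module in MODULES)


def weak_modules(scores, gap=15.0):
    """List modules sitting at least `gap` points below the overall average."""
    average = overall_score(scores)
    return [m for m in MODULES if average - scores[m] >= gap]


profile = {
    "grammar": 70,
    "active_vocabulary": 66,
    "passive_vocabulary": 72,
    "listening": 40,
}
print(overall_score(profile))  # 62
print(weak_modules(profile))   # ['listening']
```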

ELAO+: our test with an additional spoken expression module

The standard test does not include oral production. This is a deliberate choice on our part, allowing us to guarantee a fully automated, reproducible assessment process that is free from examiner bias. However, for contexts where spoken skills are a decisive criterion — recruitment for communication-heavy roles, or admission to university programmes with a strong interpersonal dimension — we offer an extended version: ELAO+.

This module replicates an interaction close to what a candidate would experience with a human examiner. Questions are delivered by an avatar, making the experience feel more natural than a standard input interface. Recorded responses can then be scored automatically by AI or assessed manually by a trainer — both options are available, and the audio files are always accessible regardless of the method chosen.

The results of the spoken module are integrated directly into the final report, avoiding the need to juggle multiple documents and making the decision-making process more straightforward.

A noteworthy feature: A0 level detection

Most language tests mirror their scale on the CEFR, which officially begins at A1. We go one step further by identifying an A0 level, reserved for complete beginners who have not yet acquired the minimum foundations assessed by the European framework.

This matters in contexts where the test population is highly heterogeneous — newly arrived jobseekers, industrial staff with no prior language training, students at the very beginning of a programme. Overlooking profiles below A1 means returning a meaningless result to a portion of test-takers. Identifying them correctly means offering a relevant starting point — which is precisely what we aim to do.

How universities use the quarter-level score

Higher education institutions have developed very practical applications around the quarter-level score, particularly for managing international mobility.

At the University of Caen in Normandy, for instance, the ELAO score serves as an orientation criterion for outbound exchange applications. A student whose quarter-level suffix is below 50 (that is, 0 or 25) may only apply for destinations that correspond exactly to their current level. A suffix of 50 or above, however, opens up a “tolerance” window: the student may apply for a destination requiring the next level up, on the assumption that the six months before departure will be enough to close the remaining gap.
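Expressed as code, the rule described above is a single comparison on the suffix. The sketch below is our reading of that rule, not the university's own implementation; the function name `highest_destination_level` and the handling of the top level are assumptions.

```python
CEFR_ORDER = ["A0", "A1", "A2", "B1", "B2", "C1", "C2"]


def highest_destination_level(level, suffix, tolerance_from=50):
    """Return the highest required level a student may apply for.

    Below `tolerance_from`, only the student's current level is allowed;
    from `tolerance_from` upwards, the next level up is allowed as well.
    """
    index = CEFR_ORDER.index(level)  # raises ValueError for unknown levels
    if suffix >= tolerance_from:
        index = min(index + 1, len(CEFR_ORDER) - 1)  # no level above C2
    return CEFR_ORDER[index]


print(highest_destination_level("B1", 25))  # B1
print(highest_destination_level("B1", 50))  # B2
```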

This kind of rule is only made possible by the precision of the score. With a binary B1/B2 result, it is impossible to distinguish a strong B1 from a fragile one. With the quarter-level score, the decision becomes documented and defensible.

Score reliability: what the study with Le Forem tells us

Measuring precisely is valuable. Measuring reliably is what gives results credibility in institutional or HR contexts. We had our test externally validated through a study conducted in collaboration with Le Forem, the public employment and vocational training service of the Walloon Region in Belgium.

The protocol was straightforward: 18,026 tests were taken twice — once on our platform, and once through an assessment conducted by professional trainers. The goal was to measure the gap between the two results.

The study showed that in 86% of cases, the difference between the ELAO score and the trainer’s score was less than half a level. In other words, in roughly six cases out of seven, our automated test and a human assessor reach very similar conclusions, even without evaluating spoken production. This level of agreement places us among the most reliable tools on the market.
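For readers who want to run the same kind of check on their own data, the agreement figure can be computed as the share of paired results that differ by less than half a level. The sketch below uses four invented pairs purely to show the arithmetic; they are not data from the Le Forem study, and the numeric encoding of levels is an assumption.

```python
CEFR_ORDER = ["A1", "A2", "B1", "B2", "C1", "C2"]


def position(level, suffix):
    """Place a quarter-level result on a numeric scale: B1 (50) -> 2.5."""
    return CEFR_ORDER.index(level) + suffix / 100


def agreement_rate(pairs, tolerance=0.5):
    """Share of (test, trainer) pairs less than `tolerance` levels apart."""
    close = sum(
        1 for test, trainer in pairs
        if abs(position(*test) - position(*trainer)) < tolerance
    )
    return close / len(pairs)


# Invented example pairs: (automated result, trainer result).
sample = [
    (("B1", 50), ("B1", 75)),  # 0.25 level apart
    (("B2", 0), ("B1", 75)),   # 0.25 level apart, across a level boundary
    (("A2", 25), ("B1", 0)),   # 0.75 level apart
    (("B2", 25), ("B2", 25)),  # identical
]
print(agreement_rate(sample))  # 0.75
```

Note that the second pair counts as agreement even though the labels read B2 and B1: measured in quarter levels, the two results are only one step apart.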

This reliability is not something we take for granted. Together with our partner Accent Languages, the training organisation behind ELAO, we administer over 1,500 tests per year to continuously monitor the consistency between human assessors and the platform. The test is a living tool, regularly benchmarked against real-world results.

What this means for HR and training professionals

For an HR manager, a quarter-level score offers something a global level simply cannot: the ability to compare candidates within the same level. Two profiles both displaying B2 are not equivalent if one sits at B2 (0) and the other at B2 (75). This distinction can make all the difference in a recruitment process where language is an operational requirement — international customer service, roles involving foreign partners, or managing multilingual teams.

For a training organisation, our score makes it possible to build genuinely homogeneous groups — something that broad CEFR levels do not always allow. A learner placed at B1 (75) does not have the same needs as a learner at B1 (0), and placing them in the same group holds one back while discouraging the other.

For a university, the granularity of the score simplifies both initial placement at the start of a programme and certification at its conclusion, with a level of traceability that holds up well with international academic partners.
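The comparison and grouping uses described in this section come down to treating a quarter-level label as a position on an ordered scale. Here is a minimal sketch under that assumption; the label format follows the examples in this article, while `quarter_steps`, `build_groups` and the greedy grouping rule are hypothetical choices made for illustration.

```python
import re

CEFR_ORDER = ["A0", "A1", "A2", "B1", "B2", "C1", "C2"]
LABEL = re.compile(r"^(A0|A1|A2|B1|B2|C1|C2)(?: \((0|25|50|75)\))?$")


def quarter_steps(label):
    """Convert a label such as 'B1 (75)' into a count of quarter steps."""
    match = LABEL.match(label.strip())
    if match is None:
        raise ValueError(f"unrecognised label: {label!r}")
    level, suffix = match.group(1), int(match.group(2) or 0)
    return CEFR_ORDER.index(level) * 4 + suffix // 25


def build_groups(learners, max_spread=1):
    """Greedily group learners whose results lie within `max_spread` steps.

    `learners` maps a name to a quarter-level label. A new group starts
    whenever a learner is too far from the first member of the current one.
    """
    ordered = sorted(learners, key=lambda n: (quarter_steps(learners[n]), n))
    groups = []
    for name in ordered:
        if groups:
            first = groups[-1][0]
            gap = quarter_steps(learners[name]) - quarter_steps(learners[first])
            if gap <= max_spread:
                groups[-1].append(name)
                continue
        groups.append([name])
    return groups


cohort = {"Ana": "B1 (0)", "Ben": "B1 (75)", "Chloe": "B1 (25)", "Dev": "B2 (0)"}
print(build_groups(cohort))  # [['Ana', 'Chloe'], ['Ben', 'Dev']]
```

In this toy cohort, the learner at B1 (75) ends up with the learner at B2 (0) rather than with the one at B1 (0), which is the kind of distinction a level-only result cannot make.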

An architecture designed by educators

What sets our scoring mechanism apart from a simple algorithm is the pedagogical layer underpinning it. Applying the conversion grid is automatic; designing it is not. Deciding how a percentage maps onto a quarter-level score is an expert decision, made by us as educators, with a precise understanding of how points are calculated and a deliberate choice of the conversion grid best suited to the target framework.

This interpretive work is what allows us to adapt to non-standard scales, such as the 35 to 36 sub-levels developed for the Alliance Française in Atlanta, without compromising the logic of the CEFR. At ELAO, technology serves pedagogical meaning, not the other way around.

If you would like to see how ELAO can fit into your language assessment processes — whether for university, HR or professional training needs — feel free to get in touch to discuss your context and objectives.